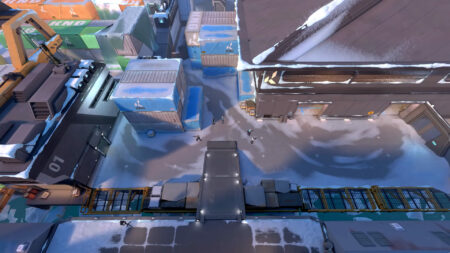

Icebox has multiple long lines of sight, which makes good information essential.

The map is also full of corners and hidey-holes, so you’ll need a decent chunk of utility to clear out these angles.

This means you have to pick the best initiators on Icebox to net yourself and your squad a win. But who are the most suited agents for the job?

Initiators aim to facilitate attacks on site by flushing enemies out of hiding spots and corners in order to assist the team when charging into battle. This is done through information utility like recons, damaging util, or flashes.

With Icebox being one of the larger maps in Valorant, you need initiators to be able to push through to a site or retake it.


In this ONE Esports guide, we’ll look at the best initiator agents for both attacking and defending on Icebox. Teams on this map quite frequently run two initiators.

The best initiators on Icebox to entry or retake with

Sova

Sova is the initiator of choice for Icebox for a variety of reasons. With there being so many nooks and crannies around the sites for defenders to play around, his Recon ability is the perfect counter.

Sova is the strongest of the info-initiators, and his utility provides valuable info and damage alike for the team.

His Shock Darts are also great for clearing out popular spots, often in conjunction with a Viper molly. They are also super useful when playing post-plant. We have some great lineups for Icebox courtesy of the Sova god himself, Average Jonas.


Sova’s Drone is also great for spotting enemies on defender sites, as well as during a retake situation. It is a more precise info-gathering tool than Fade’s Prowlers or Skye’s Trailblazer.

Finally, his ultimate, Hunter’s Fury, is an incredibly versatile tool. You can tag a player with your drone and then ult them, use it in post-plant to deny the spike defuse, and even counter ultimates like Killjoy’s Lockdown.

Gekko

Gekko is one of the most versatile agents in Valorant. His entire kit comes with a variety of uses, and he should be a major part of the meta for the 2024 VCT.

His basic free ability, Dizzy, is a great flash that provides info, since enemies in its line of sight will need to break it or risk being blinded. You can also pick Dizzy up and reuse her repeatedly after a short recharge.

Moshpit, his second ability, works well as a way to clear out corners or play post-plant. A molly that does damage over time, Mosh is another multipurpose tool in Gekko’s kit.


Gekko’s Wingman is of course what he’s best known for. Whether you want to plant the spike without heading onto site or take a fight while your buddy sits on the defuse, Wingman is the friend you need at your side.

Wingman can also be used to clear out some angles, and if enemies are in his path, he will concuss them unless broken. Like Dizzy, Wingman is reusable if picked up again.

Gekko’s ultimate, Thrash, melds Sova’s Drone and Killjoy’s Lockdown. When ready, you can send Thrash on an expedition, and if you detonate her next to enemies, they will be unable to run away or shoot for a duration. Thrash can also be picked up and reused, but only once.

Fade

While Fade is not chosen on Icebox nearly as much as Sova, she’s still a good choice when paired with Raze, who might be picked over Jett. Her utility is great by itself but can also pull off some nasty combos with Raze.

Her standard rechargeable ability, Haunt, is similar to a reveal, tagging players in its radius who are visible. It also marks them, ensuring that they leave a trail that can be tracked for a few seconds after being marked.

Fade’s second ability is called Prowlers, which can be used like a Sova drone, albeit at ground level to hunt enemies and clear corners.


Aside from checking areas, Fade’s Prowlers will track down any enemy that is marked, and if the enemy does not break them, they will be deafened and nearsighted for some time.

Fade’s Seize is the ability that makes her a perfect partner for Raze. Seize can be lobbed at enemies, and if it connects, it will hold them within a small radius for some time. If you can throw a Raze nade at them, it translates to free kills. You can also combine a Seize with mollies, Shock Darts, and the like.

Fade’s ultimate, Nightfall, is also great for finding information whether you’re attacking or defending. Upon release, it will mark all enemies in the area and tell you how many are there. It also distorts the sound and vision of enemies caught by the ult, similar to the effect of the Prowlers.

Kayo

The final entry on our list, Kayo is often chosen on Icebox as a second initiator alongside Sova or Fade. His kit is great for shutting down enemy utility and helping duelists attack thanks to his flashes.

Kayo’s free, rechargeable ability is Zero Point, a knife that prevents enemies from using their abilities if they are caught within its radius. While it is breakable, the sound cue could help reveal where enemies are playing, and if unbroken, it will tell you exactly how many enemies you’ve suppressed.

His kit comes with two flashes which can be purchased each round. They are super useful for attacking onto site or in retake situations. Kayo pop flashes are some of the most difficult to dodge in-game.

His Fragment is a molly that, similar to Gekko’s Mosh or Sova’s Shock Darts, can be used to clear out crucial angles without peeking them, or in post-plant.

Finally, his ultimate, Null CMD, shuts down all enemy abilities in its radius for the ult’s duration. This can be important to deny attacks, facilitate retakes, counter post-plant, and more. Kayo is often combined with other initiators, and Sova-Kayo is arguably the most popular initiator pairing seen on this map.
